Answer:

Explanation:

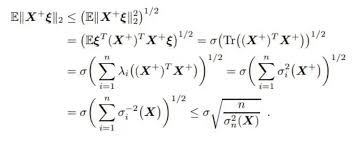

This derivation relies on two properties of sub-Gaussian random variables: their tail bounds and their moment generating functions. The main tools are the sub-Gaussian norm, the trace of a matrix (equal to the sum of its eigenvalues), and the Cauchy-Schwarz inequality. The goal is to bound the \(L_2\) norm of a sum of independent sub-Gaussian vectors in terms of their variance proxy \(\sigma_{X}^{2}\). Two facts drive the argument: a sum of independent sub-Gaussian variables is again sub-Gaussian with a scaled parameter, and the eigenvalues of the covariance matrix of a sub-Gaussian vector are controlled by its variance proxy.

Steps:

- Starting point:

The quantity to bound is the norm of \(X = \sum_{i=1}^{n} \lambda_{i} (X_{i})\), where each \(X_{i}\) is sub-Gaussian.

- Use the moment generating function (MGF) of sub-Gaussian variables:

For a sub-Gaussian vector \(X\), the MGF satisfies:

which implies bounds on moments, particularly on the second moment.

- Express the norm in terms of the trace of the covariance matrix:

and for independent vectors, the covariance matrices add:

- Eigenvalue decomposition and spectral bounds:

The trace of a covariance matrix equals the sum of its eigenvalues \(\lambda_{i}\). Each eigenvalue is at most the spectral norm of the matrix, which is in turn bounded by the variance proxy \(\sigma_{X}^{2}\).

- Applying the Cauchy-Schwarz inequality:

- Final bound:

Combining these results yields:

This inequality gives an upper bound on the \(L_2\) norm of the sum of sub-Gaussian vectors in terms of the number of vectors \(n\) and their sub-Gaussian variance proxy \(\sigma_{X}^{2}\).
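The displayed equations for the steps above are not reproduced here, so the following is only a minimal sketch of how such a chain of inequalities typically goes, not the original derivation. It rests on assumptions that are not stated in the text: the vectors \(X_{i} \in \mathbb{R}^{d}\) are independent and zero-mean, the weights are deterministic scalars (written \(a_{i}\) here to avoid a clash with the eigenvalue notation), \(\Sigma_{i}\) denotes the covariance of \(X_{i}\), and "variance proxy \(\sigma_{X}^{2}\)" means \(\mathbb{E}\exp(u^{\top} X_{i}) \le \exp(\sigma_{X}^{2}\|u\|_2^{2}/2)\) for all \(u\). The dimension \(d\) and the symbol \(S\) are introduced for illustration.

```latex
% Sketch under the assumptions stated above; S = \sum_{i=1}^{n} a_i X_i.
\begin{align*}
\mathbb{E}\,\|S\|_2^2
  &= \operatorname{tr}\bigl(\operatorname{Cov}(S)\bigr)
     && \text{(zero mean)} \\
  &= \sum_{i=1}^{n} a_i^{2}\,\operatorname{tr}(\Sigma_i)
     && \text{(independence: covariances add)} \\
  &\le d \sum_{i=1}^{n} a_i^{2}\,\|\Sigma_i\|_{\mathrm{op}}
     && \text{(trace = sum of $d$ eigenvalues, each $\le \|\Sigma_i\|_{\mathrm{op}}$)} \\
  &\le d\,\sigma_X^{2} \sum_{i=1}^{n} a_i^{2}
     && \text{($\Sigma_i \preceq \sigma_X^{2} I_d$ from the MGF bound)} \\[4pt]
\mathbb{E}\,\|S\|_2
  &\le \sqrt{\mathbb{E}\,\|S\|_2^{2}}
   \;\le\; \sigma_X \sqrt{d \sum_{i=1}^{n} a_i^{2}}
     && \text{(Cauchy-Schwarz)}
\end{align*}
```

With unit weights \(a_{i} = 1\) this reads \(\mathbb{E}\|S\|_2 \le \sigma_{X}\sqrt{dn}\). Whether the constants match the bound intended in the original answer cannot be confirmed from the text alone.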

Note: The derivation assumes the vectors are independent and sub-Gaussian, and the spectral properties of their covariance matrices are used to arrive at the bound.
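As a numerical sanity check of the trace argument, the sketch below simulates a sum of independent vectors and compares the mean squared norm with a bound of the form \(n d \sigma_{X}^{2}\). Everything specific in it is an assumption chosen for illustration rather than taken from the derivation: the vectors have i.i.d. coordinates uniform on \([-1, 1]\) (zero-mean, variance \(1/3\), and sub-Gaussian with variance proxy 1 by Hoeffding's lemma), the weights are all 1, and the function name and the values of `n`, `d`, and `trials` are hypothetical.

```python
import random


def mean_squared_norm_of_sum(n, d, trials, rng):
    """Monte Carlo estimate of E||X_1 + ... + X_n||_2^2.

    Each X_i is a d-dimensional vector with i.i.d. Uniform[-1, 1]
    coordinates (an illustrative choice, not from the derivation).
    """
    total = 0.0
    for _ in range(trials):
        s = [0.0] * d
        for _ in range(n):
            for j in range(d):
                s[j] += rng.uniform(-1.0, 1.0)
        total += sum(v * v for v in s)
    return total / trials


rng = random.Random(0)
n, d, trials = 20, 5, 5000

sigma2 = 1.0                 # variance proxy of Uniform[-1, 1] (Hoeffding's lemma)
empirical = mean_squared_norm_of_sum(n, d, trials, rng)
exact = n * d / 3.0          # trace of the summed covariances: n * d * Var(coordinate)
bound = n * d * sigma2       # trace bound using the variance proxy

print(f"empirical E||S||^2 ~ {empirical:.2f}")
print(f"trace of covariance = {exact:.2f}")
print(f"variance-proxy bound = {bound:.2f}")
```

The empirical value should sit close to the trace of the summed covariance and below the variance-proxy bound. The gap between the two reflects that the variance proxy can be strictly larger than the actual variance.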