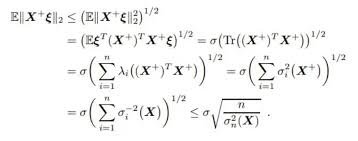

Step-by-step solution:

The expression is:

which appears to be derived from Jensen's inequality together with properties of expectations and norms.

The detailed derivation involves the following steps:

- Starting point:

This is a standard inequality (Jensen's inequality applied to the concave square root) stating that the expected norm of a random variable is less than or equal to the square root of its expected squared norm.

- Expressing the expectation:

where $\lambda_i$ are weights or eigenvalues associated with the matrix $X$, and $X_i$ are components.

- Using properties of expectation:

since expectation is linear.

- Bounding with variance:

where $\sigma_i^2$ is the variance of $X_i$, which coincides with $\mathbb{E}[X_i^2]$ when $X_i$ has zero mean.

- Expressing as a norm:

which is the square root of a weighted sum of variances.
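The steps above can be collected into a single chain. This is a sketch under stated assumptions rather than the original derivation: it assumes the squared norm can be written as $\|X\|^2 = \sum_i \lambda_i X_i^2$ with non-negative weights $\lambda_i$, and that each $X_i$ has zero mean, so that $\mathbb{E}[X_i^2] = \sigma_i^2$.

$$
\begin{aligned}
\mathbb{E}\,\|X\| &\le \sqrt{\mathbb{E}\,\|X\|^2} && \text{(Jensen, concavity of } \sqrt{\cdot}\text{)} \\
\mathbb{E}\,\|X\|^2 &= \mathbb{E}\Big[\sum_i \lambda_i X_i^2\Big] && \text{(assumed form of the squared norm)} \\
&= \sum_i \lambda_i\, \mathbb{E}[X_i^2] && \text{(linearity of expectation)} \\
&= \sum_i \lambda_i \sigma_i^2 && \text{(zero-mean assumption)} \\
\mathbb{E}\,\|X\| &\le \sqrt{\sum_i \lambda_i \sigma_i^2}
\end{aligned}
$$

If the $X_i$ are not centered, the fourth line becomes $\sum_i \lambda_i (\sigma_i^2 + \mu_i^2)$ with $\mu_i = \mathbb{E}[X_i]$, and the final bound changes accordingly.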

Final conclusion:

The inequality simplifies to:

which bounds the expected norm of the matrix $X$ in terms of the eigenvalues $\lambda_i$ and the variances $\sigma_i^2$.
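A quick numerical sanity check can make the bound concrete. The sketch below is illustrative only and rests on assumptions not stated in the original: the components $X_i$ are drawn as independent zero-mean Gaussians with standard deviations $\sigma_i$, the norm is taken to be $\|X\| = \sqrt{\sum_i \lambda_i X_i^2}$, and the function name `weighted_norm_bound_check` and the sample values are hypothetical.

```python
import numpy as np


def weighted_norm_bound_check(lam, sigma, n_samples=200_000, seed=0):
    """Compare a Monte Carlo estimate of E||X|| with sqrt(sum_i lam_i * sigma_i^2)."""
    lam = np.asarray(lam, dtype=float)
    sigma = np.asarray(sigma, dtype=float)
    rng = np.random.default_rng(seed)

    # Assumption: independent zero-mean Gaussian components with std sigma_i.
    X = rng.normal(loc=0.0, scale=sigma, size=(n_samples, lam.size))

    # Assumption: weighted norm ||X|| = sqrt(sum_i lam_i * X_i^2).
    norms = np.sqrt((lam * X**2).sum(axis=1))

    empirical = norms.mean()                  # Monte Carlo estimate of E||X||
    bound = np.sqrt((lam * sigma**2).sum())   # sqrt of the weighted sum of variances
    return empirical, bound


empirical, bound = weighted_norm_bound_check([3.0, 1.0, 0.5], [1.0, 2.0, 0.5])
print(f"estimated E||X|| = {empirical:.4f}, bound = {bound:.4f}")
```

The estimate should fall below the bound, and the gap between the two reflects the slack introduced by the Jensen step.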

Summary:

This derivation shows how the expected matrix norm can be bounded using expectations, variances, and eigenvalues, a technique often used in concentration inequalities and other probabilistic bounds in matrix analysis.