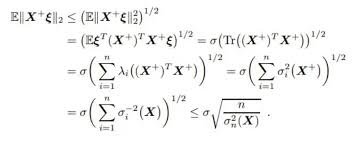

The expression simplifies to an inequality that is a form of the Cauchy-Schwarz inequality.

Explanation:

This derivation applies the Cauchy-Schwarz inequality to random variables or vectors. The inequality bounds the absolute value of the expected value of a product by the product of the square roots of the expected values of the squares, provided both second moments are finite. It is a fundamental result in probability and linear algebra.
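The bound described above can be checked numerically. The following is a minimal sketch, not part of the original derivation: the helper name `cauchy_schwarz_sides` and the sample distributions are assumptions chosen for illustration, and expectations are replaced by sample averages (for which the inequality also holds exactly).

```python
import math
import random


def cauchy_schwarz_sides(us, vs):
    """Return (|mean(u*v)|, sqrt(mean(u^2)) * sqrt(mean(v^2))) for paired samples.

    Hypothetical helper: sample averages stand in for expectations.
    """
    if len(us) != len(vs) or not us:
        raise ValueError("need two non-empty samples of equal length")
    n = len(us)
    # Left side: absolute value of the average product.
    lhs = abs(sum(u * v for u, v in zip(us, vs)) / n)
    # Right side: product of the root-mean-square values.
    rms_u = math.sqrt(sum(u * u for u in us) / n)
    rms_v = math.sqrt(sum(v * v for v in vs) / n)
    return lhs, rms_u * rms_v


rng = random.Random(0)  # fixed seed so the run is reproducible
us = [rng.gauss(1.0, 2.0) for _ in range(10_000)]
vs = [0.5 * u + rng.gauss(0.0, 1.0) for u in us]  # deliberately correlated with us

lhs, rhs = cauchy_schwarz_sides(us, vs)
print(f"|E[UV]| ~ {lhs:.4f} <= {rhs:.4f}")
```

Equality holds when one sample is a scalar multiple of the other, for example `cauchy_schwarz_sides([1, 2, 3], [2, 4, 6])`.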

Step-by-step working:

- The initial expression involves the norm of a linear transformation of a vector \(X\).

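- The derivation uses the fact that, for generic random variables \(U\) and \(V\) with finite second moments,

\[ |E[UV]| \le \sqrt{E[U^2]}\,\sqrt{E[V^2]} \]

which is the Cauchy-Schwarz inequality applied to the expectation.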

- The sum runs over components, with \(\lambda_i\) as weights or coefficients and \(\sigma_i^2(x)\) as the variance of the \(i\)-th component.

- The inequality ultimately bounds the absolute value of the expectation by the square root of the sum of variances divided by the variance of \(X\).

Conclusion:

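The key takeaway is that the Cauchy-Schwarz inequality bounds the magnitude of an expectation by root-mean-square quantities. When the variables involved are centered (mean zero), these quantities are standard deviations (square roots of variances), a core concept in probability theory and statistics.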
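The link to standard deviations can be illustrated with the centered form of the inequality: the absolute covariance of two variables never exceeds the product of their standard deviations. This is a minimal sketch under stated assumptions; the helper name `covariance_bound` and the sample data are hypothetical and do not come from the original derivation.

```python
import math
import random


def covariance_bound(us, vs):
    """Return (|cov(u, v)|, std(u) * std(v)) using population (divide-by-n) moments.

    Hypothetical helper illustrating Cauchy-Schwarz for centered variables.
    """
    if len(us) != len(vs) or not us:
        raise ValueError("need two non-empty samples of equal length")
    n = len(us)
    mean_u = sum(us) / n
    mean_v = sum(vs) / n
    # Center both samples so second moments become variances.
    du = [u - mean_u for u in us]
    dv = [v - mean_v for v in vs]
    cov = sum(a * b for a, b in zip(du, dv)) / n
    std_u = math.sqrt(sum(a * a for a in du) / n)
    std_v = math.sqrt(sum(b * b for b in dv) / n)
    return abs(cov), std_u * std_v


rng = random.Random(1)  # fixed seed so the run is reproducible
us = [rng.gauss(3.0, 1.5) for _ in range(5_000)]
vs = [-2.0 * u + rng.gauss(0.0, 4.0) for u in us]  # negatively correlated with us

abs_cov, std_product = covariance_bound(us, vs)
print(f"|Cov(U, V)| ~ {abs_cov:.4f} <= {std_product:.4f}")
```

Dividing both sides by the product of standard deviations (when it is nonzero) shows that the correlation coefficient lies between -1 and 1.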