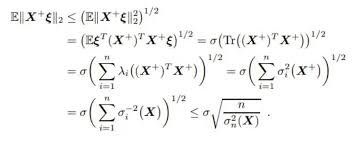

Step-by-step solution:

Given expression:

\[

\boxed{

\mathbb{E}\left[ \|X + \xi l_2\|_2 \right] \leq \left( \mathbb{E} \left[ \|X + \xi l_2\|_2^2 \right] \right)^{1/2}

}

\]

This is Jensen’s inequality for the concave square-root function, \(\mathbb{E}[\sqrt{Y}] \leq \sqrt{\mathbb{E}[Y]}\), applied to \(Y = \|X + \xi l_2\|_2^2\).
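As a quick sanity check, this particular case of Jensen’s inequality also follows from the non-negativity of the variance. The sketch below writes \(Z = \|X + \xi l_2\|_2\) (a shorthand introduced here, not part of the original derivation) and assumes \(\mathbb{E}[Z^2]\) is finite:

\[

0 \leq \operatorname{Var}(Z) = \mathbb{E}[Z^2] - \left( \mathbb{E}[Z] \right)^2 \quad \Longrightarrow \quad \mathbb{E}[Z] \leq \left( \mathbb{E}[Z^2] \right)^{1/2}

\]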

The derivation proceeds as follows:

- Express the expectation of the squared norm:

\[

\left( \mathbb{E} \left[ \|X + \xi l_2\|_2^2 \right] \right)^{1/2}

\]

- Write the squared norm as a trace, \(\|v\|_2^2 = \operatorname{Tr}(v v^T)\), and exchange trace and expectation by linearity:

\[

= \left( \operatorname{Tr} \left( \mathbb{E} \left[ (X + \xi l_2)(X + \xi l_2)^T \right] \right) \right)^{1/2}

\]

- Expand the expectation:

\[

= \left( \operatorname{Tr} \left( \mathbb{E}[X X^T] + 2 \mathbb{E}[X \xi l_2^T] + \mathbb{E}[\xi^2 l_2 l_2^T] \right) \right)^{1/2}

\]

Assuming \(X\) and \(\xi\) are independent, and \(\mathbb{E}[\xi] = 0\), the cross term vanishes:

\[

= \left( \operatorname{Tr} \left( \mathbb{E}[X X^T] + \mathbb{E}[\xi^2] l_2 l_2^T \right) \right)^{1/2}

\]
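To spell out why the cross term drops, one can use \(\operatorname{Tr}(X l_2^T) = l_2^T X\) and factor the expectation by independence. This sketch treats \(l_2\) as a fixed (deterministic) vector, which the derivation appears to assume but does not state:

\[

\operatorname{Tr} \left( \mathbb{E}[\xi X l_2^T] \right) = \mathbb{E}[\xi \, l_2^T X] = \mathbb{E}[\xi] \, l_2^T \mathbb{E}[X] = 0

\]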

- Express the trace in terms of variances and eigenvalues:

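Let \(\lambda_1, \dots, \lambda_n\) denote the eigenvalues of the second-moment matrix \(\mathbb{E}[X X^T]\) (which equals the covariance matrix of \(X\) when \(\mathbb{E}[X] = 0\)). Its trace is the sum of these eigenvalues, and \(\operatorname{Tr}(l_2 l_2^T) = \|l_2\|_2^2\):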

\[

= \left( \sum_{i=1}^n \lambda_i + \mathbb{E}[\xi^2] \|l_2\|_2^2 \right)^{1/2}

\]

Assuming \(\|l_2\|_2^2 = 1\):

\[

= \left( \sum_{i=1}^n \lambda_i + \mathbb{E}[\xi^2] \right)^{1/2}

\]

- Bounding the sum of eigenvalues:

Since each \(\lambda_i\) is at most the maximum eigenvalue, written \(\sigma_{\max}^2\), the sum is at most \(n \sigma_{\max}^2\):

\[

\leq \left( n \sigma_{\max}^2 + \mathbb{E}[\xi^2] \right)^{1/2}

\]

- Final inequality, using \(\sqrt{a + b} \leq \sqrt{a} + \sqrt{b}\) for \(a, b \geq 0\):

\[

\leq \sqrt{n} \sigma_{\max} + \sqrt{\mathbb{E}[\xi^2]}

\]
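The chain of bounds can be checked numerically. The following is a minimal sketch, not part of the original solution: it assumes a zero-mean Gaussian \(X\) with a chosen covariance, an independent zero-mean Gaussian \(\xi\), and takes \(l_2\) to be the first standard basis vector (so \(\|l_2\|_2 = 1\)). The function name `bound_chain` and all parameter choices are hypothetical.

```python
import numpy as np


def bound_chain(cov, xi_var, n_samples=200_000, seed=0):
    """Compare a Monte Carlo estimate of E||X + xi*l2|| with the three bounds.

    Assumptions (illustrative only): X ~ N(0, cov), xi ~ N(0, xi_var),
    X and xi independent, l2 = first standard basis vector.
    Returns (mc_mean_norm, rms_norm, eig_bound, final_bound).
    """
    rng = np.random.default_rng(seed)
    n = cov.shape[0]

    l2 = np.zeros(n)
    l2[0] = 1.0  # unit vector, so ||l2||_2^2 = 1

    # Draw independent samples of X and xi.
    X = rng.multivariate_normal(np.zeros(n), cov, size=n_samples)
    xi = rng.normal(0.0, np.sqrt(xi_var), size=n_samples)

    # Monte Carlo estimate of the expected norm (left-hand side).
    V = X + xi[:, None] * l2
    mc_mean_norm = np.linalg.norm(V, axis=1).mean()

    # Exact right-hand sides computed from the eigenvalues of E[X X^T] = cov.
    eigvals = np.linalg.eigvalsh(cov)
    sigma_max = np.sqrt(eigvals.max())
    rms_norm = np.sqrt(eigvals.sum() + xi_var)          # (sum lambda_i + E[xi^2])^{1/2}
    eig_bound = np.sqrt(n * sigma_max**2 + xi_var)      # (n sigma_max^2 + E[xi^2])^{1/2}
    final_bound = np.sqrt(n) * sigma_max + np.sqrt(xi_var)

    return mc_mean_norm, rms_norm, eig_bound, final_bound
```

Calling `bound_chain(np.diag([1.0, 2.0, 3.0]), 0.5)` should return four values in non-decreasing order; the first is a sample estimate, so it fluctuates slightly with the seed, while the other three are computed exactly from the eigenvalues.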

Summary:

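The derivation bounds the expected norm of the random vector \(X + \xi l_2\) by \(\sqrt{n} \sigma_{\max} + \sqrt{\mathbb{E}[\xi^2]}\), where \(\sigma_{\max}^2\) is the largest eigenvalue of \(\mathbb{E}[X X^T]\), \(n\) is the dimension, and \(\mathbb{E}[\xi^2]\) equals the variance of \(\xi\) because \(\mathbb{E}[\xi] = 0\).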

Answer:

The key inequality derived is:

\[

\mathbb{E}\left[ \|X + \xi l_2\|_2 \right] \leq \sqrt{\sum_{i=1}^n \lambda_i + \mathbb{E}[\xi^2]} \leq \sqrt{n} \sigma_{\max} + \sqrt{\mathbb{E}[\xi^2]}

\]

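which bounds the expected norm in terms of the eigenvalues of \(\mathbb{E}[X X^T]\) and the second moment of \(\xi\), under the assumptions that \(X\) and \(\xi\) are independent, \(\mathbb{E}[\xi] = 0\), and \(\|l_2\|_2 = 1\).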

Note: The original image appears to be a derivation involving matrix trace, eigenvalues, and variance bounds, leading to a probabilistic bound on the norm.